In New Dark Age: Technology and the End of the Future, the artist, writer and technologist James Bridle asks why the technology that defines our everyday lives has not advanced our intelligence and understanding of the world. The book is organised around ten C’s: chasm, computation, climate, calculation, complexity, cognition, complicity, conspiracy, concurrency and cloud. In scrutinising each, Bridle begins to outline a model for living in what he calls the ‘greyzone’. It is an engaging, sharp and urgent work that takes us well beyond the neo-Luddite fantasies of techno-apocalypse so prevalent in recent critiques of technology. In fact, Bridle avoids polarised conclusions of utopia or dystopia, which he identifies as a dangerous part of deterministic computational thinking.[1] This thinking, he argues, assumes a course of events that is linear and inevitable, absolving us of all responsibility.

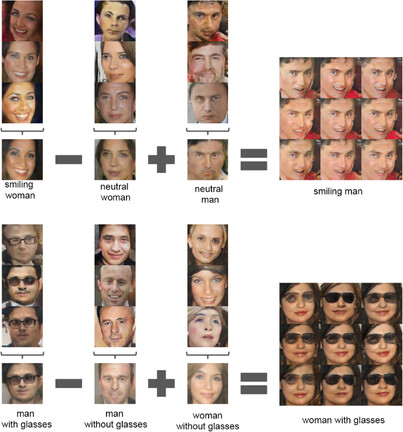

By parsing the bias of computational logic (the assumption that events and technologies always behave as intended) as it is reflected and amplified in technologies, Bridle seeks out a new mode of scientific reasoning with and about decision-making tools: a mode that may produce affinity between human and non-human intelligence and take us towards a future of planetary survival. This, the book makes clear, has little to do with the mastery of technical skills so often proposed as a solution to all manner of problems. Such skills, while necessary for perfecting the attributes of specific technologies, teach us little about the mechanisms governing them. What Bridle terms ‘systemic literacy’ is an understanding of the epistemological justifications behind systems design, as well as an ability to explore and alter the parameters of those mechanisms in a way that can introduce change to a complex network of systems. We may, for example, no longer understand how high-frequency trading works, but we could still examine and change the conditions under which algorithmic journalism influences the automated market.

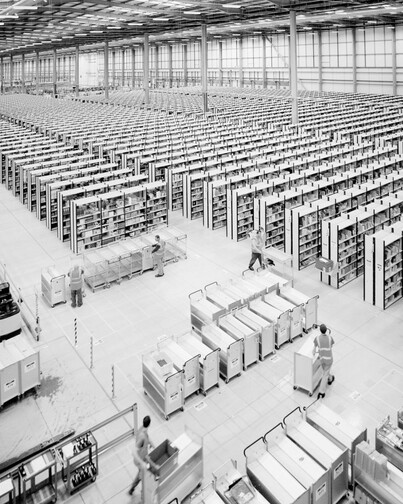

Any answers to the problems produced by this computational confidence, Bridle explains in the first chapter (‘the Chasm’), may only be sought within the incomputable totality and complexity of the network. A personal computer is not an object we carry around; it is one we are living inside of. It is only after accepting that none of us has an unmediated view of the mechanism from the outside, and as such no way of reducing its complexity, that we might be able to think through uncertainties with non-human intelligence. This is easier said than done. Even the more seasoned technology scholars occasionally say things like ‘We must delete Facebook’ or ‘I’m tired of all this media’, without a shred of doubt that there is an off-grid sanctuary where everything can be well.

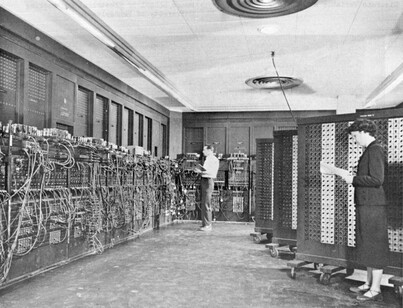

In his chapter on computation, Bridle insists that in order to think through the incomputable – the infinite possibility of risks we cannot account for in advance – we must first accept the extent to which automation bias influences our reasoning. Even those sensitive to science and well versed in the cultural context of technologies often favour machine decision-making over decisions based on personal narrative or their own judgment, because it is presumed to be easier and faster, two primary markers of success in the age of the monetised self. Unfortunately, it is potentially deadly, not only for the passengers misled by an autopilot, the civilians affected by conspiracy memes or the Amazon workers trapped inside a computational maze, but also for a future that risks being modelled on such automation biases.[2] These biases were once posited as discoverable facts, under the pledge of extreme secrecy, by only a few men with a very limited grasp of their own position in the world, eager to win all wars and dominate the planet.

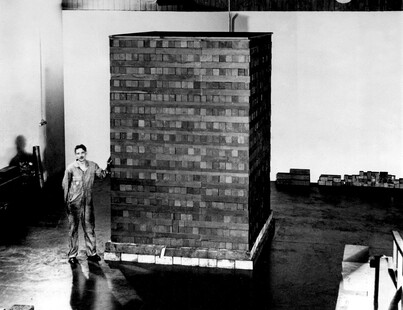

Our faith in our ability to predict, control and regulate machines is bound to turn into a self-fulfilling prophecy, where the past informs and accelerates a particular kind of computational future that is far worse than the one Virginia Woolf called ‘dark’.[3] In his search for the origin and arrogance of computational thinking, one might have expected Bridle to return to the origins of IBM and the Manhattan Project. Instead, in his chapter on ‘the Cloud’, Bridle begins by tracing the way computational ideas reshaped the world, asserting that the ‘story of computational thinking begins with the weather’ (p.20). Lewis Fry Richardson’s aspiration to produce forecasts by making computation faster than the weather was eventually realised by the military technologies he himself, as a committed pacifist, disavowed. It is a dark irony that these technologies, mined from the environment and often mimicking nature with an intention to control it,[4] are now threatened by global warming, as is our own ability to think, computationally or otherwise. The history of computational thinking may end exactly where it began, with the weather. Carbon dioxide, as Bridle says, ‘clouds the mind [. . .] The crisis of global warming is a crisis of the mind, a crisis of thought, a crisis in our ability to think another way to be’ (p.75).

In each of the following chapters clouds return in many different configurations as Bridle explores the connections between melting permafrost, high-frequency trading, fake news, deportation logistics, popular conspiracy theories (which Bridle describes as ‘the last resort of the powerless’ and which are no match for the whiff of conspiracy emanating from CIA and NSA surveillance programs), the explosion of pop science, and utterly terrifying, algorithmically generated cartoons on YouTube.[5]

The book is meticulously researched across many disciplines, something made evident by the precision of the references, which range from philosophy, art history and literature to geography, engineering and popular culture. The reading is pleasurable, with a wide cast of supporting characters that range from Egyptian nobility to Hwang Woo-Suk, Edvard Munch and Friedrich Hayek, who guide us through the history of techno-optimism and disappointment, from Greenland to Syria.

Some of the references, such as the mention of accelerationism or the existing theories of the uncomputable, could have been explained at greater length. Yet, in deliberately avoiding these lengthy tangents, Bridle does justice to the complexity of the subject without resorting to the kind of dense technical passages that may prove overwhelming for readers less familiar with the theory of computational architectures.

It is with this same precision that Bridle addresses a subject still taboo in journalistic circles today: the inflated value of media technologies as tools for informing, revealing and empowering. The idea that if only people were fully informed, or the voiceless had a platform, something would change has been irrevocably compromised by the last decade’s revelations of state and government secrets, made easier by technologies. According to Bridle, these leaks have not shifted the balance of power, nor improved the way we understand ourselves and our complicity within the whole. The information, made available in far larger quantities than was ever possible before, is fed back into the same hungry circuits buzzing with Pepe the Frog, dead babies and kittens, all of equal value for the algorithm.

The same erosion of trust in journalism hangs like a dark cloud over science and academia. And the worst of it, Bridle points out, is not that scientists and academics are deliberately trying to deceive people, but that they do so unconsciously, thanks to a combination of complex factors such as ‘institutional pressures, lax publishing standards, and sheer volume of data available’ (p.91).

In 1985, Marguerite Duras said of the dark age we live in now: ‘I believe the men will drown in information’. She was right. We are drowning. For all of us who want to swim and think and act in this ocean, without any pretence that we will ever wield the future or reach the shore, New Dark Age is a stellar companion.

Footnotes

1. For a problematisation of computation logic and a definition of the incomputable, see P.E. Agre: ‘Toward a Critical Technical Practice: Lessons Learned in Trying to Reform AI’, in G. Bowker, L. Gasser, L. Star and Bill Turner, eds.: Bridging the Great Divide: Social Science, Technical Systems, and Cooperative Work, Erlbaum, 1997. See also the work of Luciana Parisi, Lev Manovich, Matthew Fuller, Andrew Goffey, Beatrice Fazi, Inigo Wilkins and James Trafford.

2. This phenomenon is known as automation bias, and it has been observed in every computation domain from spell-checking software to autopilots, and in every type of person. Automation bias ensures that we value automated information more highly than our own experiences, even when it conflicts with other observations – particularly when those observations are ambiguous.

3. ‘The future is dark, which is the best thing the future can be’, V. Woolf: A Writer's Diary: Being Extracts from the Diary of Virginia Woolf, ed. L. Woolf, London 1953.

4. See, for example, J. Parikka: Insect Media: An Archaeology of Animals and Technology, Minneapolis 2010; and J. Crary: 24/7: Late Capitalism and the Ends of Sleep, London 2014.

5. See, for example, ‘Wrong Heads Disney Wrong Ears Wrong Legs Kids Learn Colors Finger Family 2017 Nursery Rhymes’, YouTube, accessed 24th January 2019. See also ‘Disney Cars Surprise Eggs with Limited Edition McQueen’, YouTube, accessed 24th January 2019. Set to autoplay to see the extent of variations that follow.